Artificial intelligence is already changing the way we live our daily lives and interact with machines. From optimising supply chains to chatting with Amazon Alexa, it has a profound impact on our society and economy. Over the coming years, that impact will only grow as the capabilities and applications of AI continue to expand.

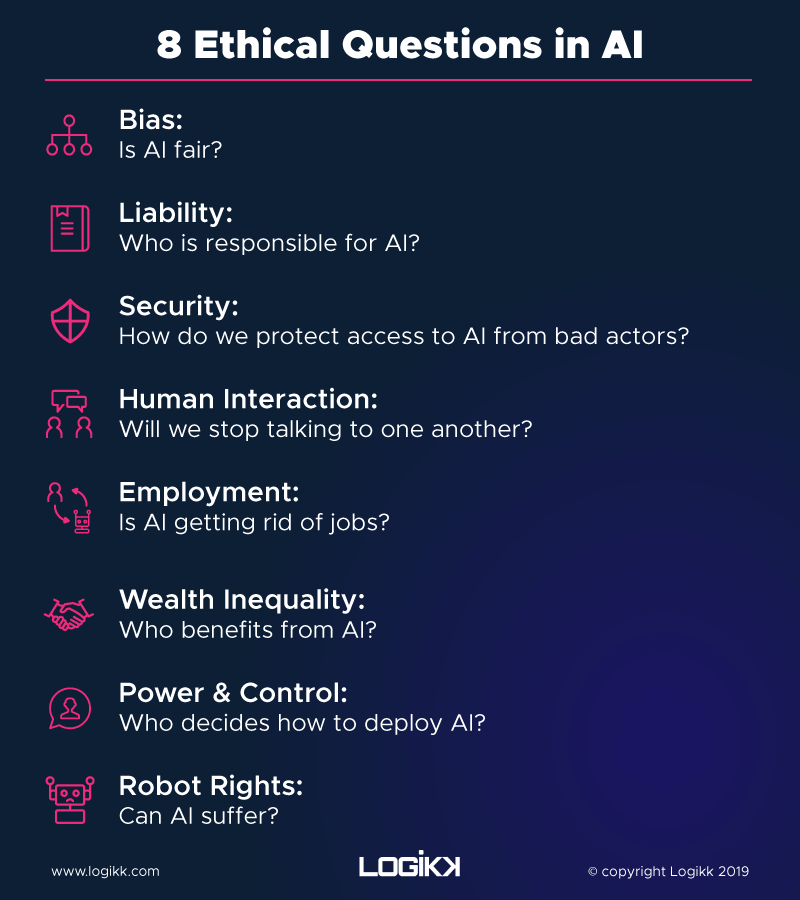

AI promises to make our lives easier and more connected than ever. However, any technology that affects society so profoundly raises serious ethical considerations. This is especially true when designing and creating intelligence that humans will interact with and trust. Experts have warned about the serious ethical dangers involved in developing AI too quickly or without proper forethought. These are the top issues keeping AI researchers up at night.

1) Bias: Is AI fair?

Bias is a well-established problem in AI (and in human intelligence, for that matter). An AI takes on the biases of the dataset it learns from. This means that if researchers train an AI on data that are skewed by race, gender, education, wealth, or any other factor, the AI will learn that bias. For instance, an artificial intelligence application used in the United States to predict who would commit future crimes assigned higher risk scores to black people than to white people, and recommended harsher actions for them, reflecting the racial bias in America’s criminal incarceration data.

Of course, the challenge with AI training is that there’s no such thing as a perfect dataset. There will always be under- and overrepresentation in any sample. These are not problems that can be addressed quickly. Mitigating bias in training data and ensuring that AI treats people equally is key to developing ethical artificial intelligence.
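To make the idea of a skewed dataset concrete, here is a minimal Python sketch of one way to check for it before training. It is an illustration under stated assumptions, not a complete fairness audit: the function names (`group_rates`, `disparity_ratio`), the field names (`group`, `high_risk`), and the records themselves are all hypothetical and made up for this example. It reports how much of the dataset each group makes up, how often each group carries the "high risk" label, and the ratio between the lowest and highest of those label rates.

```python
from collections import Counter


def group_rates(records, group_key, label_key):
    """Return {group: (share_of_dataset, positive_label_rate)}."""
    totals = Counter(r[group_key] for r in records)
    positives = Counter(r[group_key] for r in records if r[label_key])
    n = len(records)
    return {g: (totals[g] / n, positives[g] / totals[g]) for g in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest positive-label rate across groups.

    1.0 means every group is labelled positive at the same rate;
    values well below 1.0 suggest the labels are skewed between groups.
    """
    label_rates = [rate for _, rate in rates.values()]
    highest = max(label_rates)
    return min(label_rates) / highest if highest else 1.0


# Toy, made-up records for illustration only. This is NOT real data.
records = (
    [{"group": "A", "high_risk": True}] * 30
    + [{"group": "A", "high_risk": False}] * 30
    + [{"group": "B", "high_risk": True}] * 10
    + [{"group": "B", "high_risk": False}] * 30
)

rates = group_rates(records, "group", "high_risk")
ratio = disparity_ratio(rates)

for group, (share, rate) in sorted(rates.items()):
    print(f"group {group}: {share:.0%} of dataset, {rate:.0%} labelled high risk")
print(f"disparity ratio: {ratio:.2f}")
```

In this toy sample, group A is both overrepresented and labelled high risk twice as often as group B, so a model trained on it would likely reproduce that gap. A check like this only surfaces the skew. Deciding whether it reflects bias, and how to correct for it, still requires human judgement.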

2) Liability: Who is responsible for AI?

When an Uber autonomous vehicle killed a pedestrian in March 2018, it raised many ethical questions. Chief among them is “Who is responsible, and who’s to blame when something goes wrong?” One could blame the developer who wrote the code, the sensor hardware manufacturer, Uber itself, the Uber supervisor sitting in the car, or the pedestrian for crossing outside a crosswalk.

AI development will inevitably involve errors, long-term changes, and unforeseen consequences. Since AI is so complex, determining liability isn’t trivial. This is especially true when AI has serious implications for human lives, as in piloting vehicles, determining prison sentences, or automating university admissions. These decisions will affect real people for the rest of their lives. On one hand, AI may be able to handle these situations more safely and efficiently than humans. On the other hand, it’s unrealistic to expect that AI will never make a mistake. Should we write that off as the cost of switching to AI systems, or should we prosecute AI developers when their models inevitably make mistakes?

3) Security: How do we protect access to AI from bad actors?

As AI becomes more powerful across our society, it will also become more dangerous as a weapon. It’s possible to imagine a scary scenario where a bad actor takes over the AI model that controls a city’s water supply, power grid, or traffic signals. Scarier still is the militarisation of AI, where robots learn to fight and drones can fly themselves into combat.

Cybersecurity will become more important than ever. Controlling access to the power of AI is a huge challenge and a difficult tightrope to walk. We shouldn’t centralise the benefits of AI, but we also don’t want the dangers of AI to spread. This will become especially challenging in the coming years if AI grows more intelligent than us and operates an order of magnitude faster than our brains.

4) Human Interaction: Will we stop talking to one another?

An interesting ethical dilemma of AI is the decline in human interaction. Now more than at any time in history, it’s possible to entertain yourself at home, alone. Online shopping means you don’t ever have to go out if you don’t want to.

While most of us still have a social life, the number of in-person interactions we have has diminished. Now, we’re content to maintain relationships via text messages and Facebook posts. In the future, AI could be a better friend to you than your closest friends. It could learn what you like and tell you what you want to hear. Many worry that this digitisation (and perhaps eventual replacement) of human relationships sacrifices an essential, social part of our humanity.

5) Employment: Is AI getting rid of jobs?

This is a concern that repeatedly appears in the press. It’s true that AI will be able to do some of today’s jobs better than humans. Inevitably, the people who hold those jobs will lose them, and it will take a major societal initiative to retrain them for new work. However, it’s likely that AI will mostly replace jobs that are boring, menial, or unfulfilling. Individuals will be able to spend their time on more creative pursuits and higher-level tasks. While jobs will go away, AI will also create new markets, industries, and jobs for future generations.

6) Wealth Inequality: Who benefits from AI?

The companies that are spending the most on AI development today are companies that have a lot of money to spend. A major ethical concern is that AI will only serve to centralise wealth further. If an employer can lay off workers and replace them with unpaid AI, then it can generate the same revenue without paying wages, and keep the difference as profit.

Machines will create more wealth than ever in the economy of the future. Governments and corporations should start thinking now about how to redistribute that wealth so that everyone can participate in the AI-powered economy.

7) Power & Control: Who decides how to deploy AI?

Along with the centralisation of wealth comes the centralisation of power and control. The companies that control AI will have tremendous influence over how our society thinks and acts each day. Regulating the development and operation of AI applications will be critical for governments and consumers. Just as we’ve recently seen Facebook get in trouble for the influence its technology and advertising have had on society, we might also see AI regulations that codify equal opportunity for everyone and consumer data privacy.

8) Robot Rights: Can AI suffer?

A more conceptual ethical concern is whether AI can or should have rights. Because an artificially intelligent system is a piece of computer code, it’s tempting to think that it can’t have feelings. You can get angry with Siri or Alexa without hurting their feelings. However, consciousness and intelligence arguably operate on a system of reward and aversion. As artificially intelligent machines become smarter than us, we’ll want them to be our partners, not our enemies. Codifying humane treatment of machines could play a big role in that.

Ethics in AI in the coming years

Artificial intelligence is one of the most promising technological innovations in human history. It could help us solve a myriad of technical, economic, and societal problems. However, it will also come with serious drawbacks and ethical challenges. It’s important that experts and consumers alike be mindful of these questions, as they’ll determine the success and fairness of AI over the coming years.
