Machine learning, like most emerging technologies, is evolving rapidly. In the past two years, researchers across the industry have made strides in everything from language models and image generation to 3D printing and quantum computing. The following are three recent developments in machine learning algorithms that excite us the most.

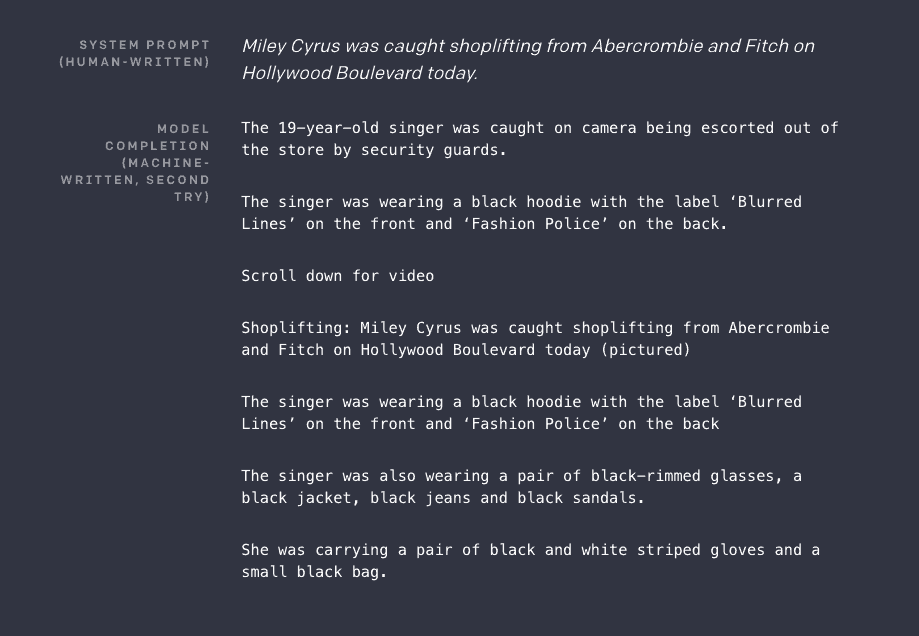

GPT-2 Can Predict Text Without Task-Specific Training

Announced in February 2019, OpenAI’s GPT-2 is an unsupervised language model that predicts and writes a passage of text from a short prompt. If you were to input “U.S. democratic election,” for instance, it would output a few paragraphs of plausible-sounding text on that topic. The full model contains 1.5 billion parameters and was trained on a dataset of eight million web pages.
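To make the prompt-in, text-out idea more concrete, here is a deliberately tiny sketch of next-word prediction. It is not GPT-2: it swaps the neural network for a simple bigram count table, and the names `train_bigram` and `generate`, along with the toy corpus, are our own assumptions for illustration. What it shares with GPT-2 is the overall loop: learn from raw, unlabeled text, then extend a prompt one word at a time.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count which word follows which in raw, unlabeled text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, prompt, max_new_words=5):
    """Greedily extend the prompt, always picking the most frequent next word."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # the last word was never seen with a successor
        next_word, _count = candidates.most_common(1)[0]
        words.append(next_word)
    return " ".join(words)


# Toy corpus (hypothetical); GPT-2 learned from millions of web pages instead.
corpus = "the cat sat on the mat . the cat ate the fish . the cat sat down ."
model = train_bigram(corpus)
print(generate(model, "the", max_new_words=2))  # prints "the cat sat"
```

A bigram table only looks at the single previous word, which is why its output degrades almost immediately. A model like GPT-2 conditions on a much longer stretch of preceding text, but the generate-one-token-then-repeat loop is the same basic shape.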

Still, GPT-2 is far from perfect. Even when researchers entered a prompt on a topic well represented in the dataset, such as Brexit, the model produced acceptable content only about 50 percent of the time. When given highly specific topics, the results were significantly worse.

Potential applications of GPT-2 range from the beneficial (writing assistance, translation, speech recognition) to the malicious (fake news, spam, impersonation). Because of that potential for misuse, the researchers are limiting what the public can access.

Machine Learning Algorithms Leap Toward Quantum Computing

The IBM Research team, alongside the MIT-IBM Watson AI Lab, announced in March 2019 that they have been developing quantum algorithms: machine learning algorithms designed to run on quantum computers. Success in this endeavor would let machine learning programs tap into the enhanced feature mapping capabilities of quantum computers, significantly increasing their scalability.

In principle, quantum algorithms can distinguish aspects of data points much more finely than their classical machine learning counterparts, because they map the data into a far larger feature space. That richer feature mapping could improve classification across many kinds of AI systems.
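For readers who want a feel for what "feature mapping" means here, the following is a minimal sketch that simulates a very simple quantum feature map in plain Python. It is an assumption-laden toy, not IBM's circuit: each feature is encoded as a single-qubit rotation, there is no entanglement, and the names `feature_state` and `quantum_kernel` are hypothetical. The similarity it computes is the squared overlap between two encoded states, which a classifier could then use as a kernel.

```python
import math


def feature_state(x):
    """Map a feature vector to the amplitudes of a product state.

    Each feature x_i becomes the single-qubit state [cos(x_i/2), sin(x_i/2)],
    i.e. an RY(x_i) rotation applied to |0>. The full state is the tensor
    product of those qubits, so n features yield 2**n amplitudes.
    """
    state = [1.0]
    for xi in x:
        qubit = (math.cos(xi / 2), math.sin(xi / 2))
        state = [amp * q for amp in state for q in qubit]
    return state


def quantum_kernel(x, y):
    """Squared overlap |<phi(x)|phi(y)>|^2 between two encoded data points."""
    overlap = sum(a * b for a, b in zip(feature_state(x), feature_state(y)))
    return overlap ** 2


# Identical points overlap completely; distant points overlap less.
print(quantum_kernel([0.3, 1.2], [0.3, 1.2]))
print(quantum_kernel([0.3, 1.2], [2.5, 0.1]))
print(len(feature_state([0.1, 0.2, 0.3])))  # 3 features -> 8 amplitudes
```

Note how the number of amplitudes doubles with every added feature. A classical simulation like this one quickly becomes expensive, whereas a quantum computer would hold the state natively. This particular map is easy to simulate classically, so it only illustrates the mechanics, not any quantum advantage.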

Unfortunately, we’re still some time away from reaping the benefits of quantum algorithms. Quantum computers are not yet stable enough to run machine learning algorithms at a practical scale. Because of this limitation, IBM’s accomplishment is more of a proof of concept than anything else. However, it’s still a significant step in the right direction.

Graph Convolutional Networks Enable Machine Learning on Graphs

Historically, machine learning algorithms have had difficulty working with graphs. Although graphs are excellent at conveying information, their complex and irregular structure makes them poorly suited to traditional neural networks.

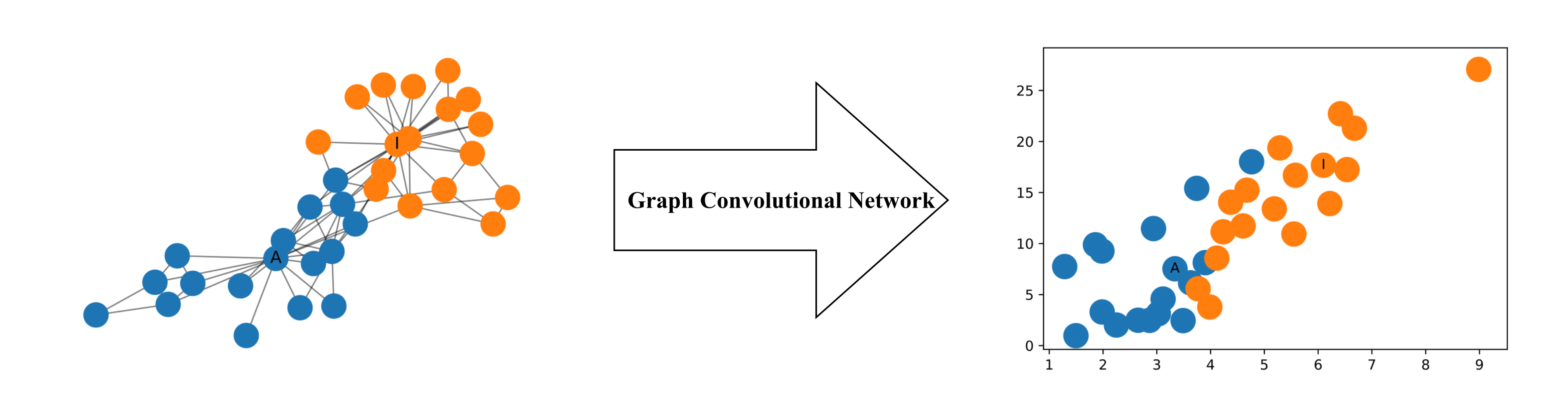

Simply put, a graph convolutional network (GCN) is a neural network that lets you apply machine learning to graphs. GCNs can perform computations on separate batches of nodes in a graph rather than on the entire dataset at once. They extract attributes of each node by examining its neighbors, then aggregate this information to form graph convolutional layers.

A two-dimensional representation of nodes in a network determined by GCN. | Source: Towards Data Science
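The neighbor aggregation described above can be sketched in a few lines of plain Python. This is a minimal illustration under our own assumptions, not a production implementation: it applies one layer to a whole (tiny) graph at once rather than to batches, it uses the symmetric degree normalization from Kipf and Welling's GCN formulation, and the helper names `matmul` and `gcn_layer` and the example graph are hypothetical.

```python
import math


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def gcn_layer(adjacency, features, weights):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 @ features @ weights)."""
    n = len(adjacency)
    # Add self-loops so each node keeps part of its own features.
    a_hat = [
        [adjacency[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
        for i in range(n)
    ]
    # Normalize by node degree so well-connected nodes do not dominate.
    inv_sqrt_deg = [1.0 / math.sqrt(sum(row)) for row in a_hat]
    norm = [
        [inv_sqrt_deg[i] * a_hat[i][j] * inv_sqrt_deg[j] for j in range(n)]
        for i in range(n)
    ]
    aggregated = matmul(norm, features)        # mix in neighbor features
    transformed = matmul(aggregated, weights)  # learned linear transform
    return [[max(0.0, v) for v in row] for row in transformed]  # ReLU


# Path graph 0 - 1 - 2; only node 0 starts with a nonzero feature.
adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
features = [[1.0], [0.0], [0.0]]
weights = [[1.0]]  # in a real GCN these values are learned during training
print(gcn_layer(adjacency, features, weights))
```

After one layer, node 0 keeps half of its feature, its neighbor node 1 receives a share of about 0.41, and node 2 receives nothing because it is two hops away. Stacking a second layer would let information from node 0 reach node 2, which is how deeper GCNs capture wider neighborhoods.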

You can train GCNs in a supervised or semi-supervised fashion to perform machine learning on almost any network. Research into social networks, protein interactions, and the Internet stands to benefit from advances in GCNs.

Machine Learning Algorithms: 2019 and Beyond

These three breakthroughs represent just a small subset of the strides that researchers are making in machine learning. Moving forward, we’ll likely see further advances in machine learning algorithms as well as the discovery of new applications for them.

We’ve only just scratched the surface of what machine learning can accomplish. Computer vision, speech recognition, fraud detection – by 2025, we’ll likely be talking about innovations across several scientific fields that aren’t even part of the discussion today.
